How big a problem were Y2K bugs? Here we are over a quarter century later, and I still see folks arguing about it online.

For those unaware, back in the 20th century, computer memory and storage space were dear. Many, many tricks were used to save a few bytes. One such trick was to store only two digits for the year, so 1985, for example, would be stored simply as “85”. It was a reasonable choice: I’m sure people coding those early programs thought, “surely by the end of the century this code will have long since been replaced by something that can handle the new century.”

My first full-time IT job was writing mortgage loan processing software. That was in 1975 and, believe me, none of us thought that the code we were writing would still be in use in 1999. Mortgage loans in the US, however, typically last 30 years, so a loan written in 1975 already ran five years past 2000, and our software had to handle dates throughout the projected lifetime of each loan. I’m pretty sure almost all of our code was Y2K compliant. Writing code that wasn’t would have invited disaster as soon as somebody reused your fancy date manipulation routines to handle dates at or near the end of a loan’s life.
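To see why mortgage code had to be century-aware decades early, here is a minimal Python sketch, assuming years are kept as two digits the way the storage trick above describes. The function names are hypothetical and not taken from any real loan system.

```python
def maturity_year_two_digit(start_yy: int, term_years: int) -> int:
    """Add a loan term to a two-digit year; the century is silently dropped."""
    return (start_yy + term_years) % 100


def maturity_year_four_digit(start_year: int, term_years: int) -> int:
    """The same calculation using a full four-digit year."""
    return start_year + term_years


# A 30-year loan written in 1975.
short = maturity_year_two_digit(75, 30)    # 5, i.e. stored as "05"
full = maturity_year_four_digit(1975, 30)  # 2005

# With two digits, the loan appears to mature before it was written.
print(short < 75)    # True: the ordering is wrong
print(full > 1975)   # True: the ordering is right
```

Any sort, comparison, or remaining-term calculation that relies on that ordering goes wrong, which is why routines handling 1975 originations already had to treat 2005 as coming after 1975.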

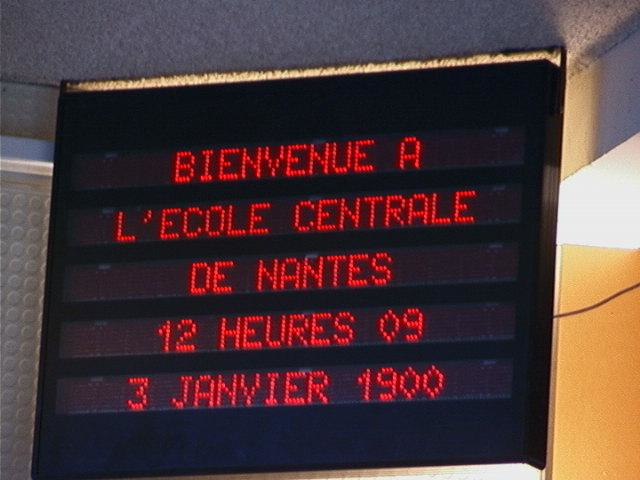

I was far, far away from the mortgage loan business when Y2K arrived, but I did have one small bug appear. I had written an electronic medical record (EMR) program in Perl, and Perl’s built-in localtime and gmtime functions return the year as the number of years since 1900. Since neither Perl nor computing was around before 1910, everything worked fine if, when printing a year, you simply output a literal “19” followed by that integer. The right way would have been to print 1900+$year but, well, somebody (maybe even I) didn’t do that. So when I came to work on the first business day of 2000, a few places where the EMR printed appointment calendars listed the year as “19100”. Ugly, but nobody died, and I think it took me about ten minutes to find the offending code and fix it.
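The bug is easy to reproduce. Perl’s localtime, like the tm_year field of C’s struct tm, reports 2000 as 100. The sketch below models that convention in Python; Python’s own time module returns full four-digit years, so the 1900 offset is applied by hand here, and the function names are hypothetical.

```python
def years_since_1900(full_year: int) -> int:
    """Model the offset year that Perl's localtime returns."""
    return full_year - 1900


def buggy_label(offset_year: int) -> str:
    """Glue a literal '19' onto the offset year: only right for 1910-1999."""
    return "19" + str(offset_year)


def correct_label(offset_year: int) -> str:
    """Add the 1900 offset back before printing."""
    return str(1900 + offset_year)


for year in (1999, 2000):
    y = years_since_1900(year)
    print(buggy_label(y), correct_label(y))
# 1999 1999
# 19100 2000
```

The buggy version also fails for 1900 through 1909 (1905 would print as “195”), which is why the shortcut only held up for years from 1910 on.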

Aside from some amusing signs such as the above, I don’t remember there being any dramatic date-related incidents after the start of the year, despite predictions of airplanes falling from the sky, public utilities failing, nuclear weapons deploying, elevators crashing to the basement, and operating room equipment failing.

So what are the arguments about? One side claims that the Y2K nothingburger was the end result of millions of IT professionals working overtime to find and correct Y2K bugs. There were, in fact, many personnel and huge budgets devoted to preventing Y2K failures.

The other side claims that it simply wasn’t that big of a problem in the first place, but rather an excuse to inflate IT budgets.

Of course, reality was almost certainly somewhere in the middle. Had the world not taken Y2K seriously, I’m certain there would have been much more dramatic fallout than what actually happened. On the other hand, the fact that almost nothing dramatic happened suggests that Y2K wouldn’t have been a civilization-ending cataclysm if left alone: had the problem been that severe, some of the bugs the Y2K mitigators missed would surely have shown up, since there just isn’t that big a chance that they did that perfect a job.

So hats off to those who toiled to keep us alive going into the new millennium (or was that actually 2001?), but also to the coders of the late 20th century who wrote code robust enough to survive the date problem without revision.

—2p